Deepfake Detection: Neural Networks Are Fighting Themselves

Human imagination is a tricky thing. Hey, “If you can dream it, you can do it,” right? Well, sci-fi writers and visionaries have been dreaming up convincing digital doubles for decades, and here we are: somewhat confused children, not really sure whether the Santa in front of us is real. His beard looks white, the belly’s there, so are the presents, and he looks kind enough… But can we trust our eyes, or our ears, for that matter?

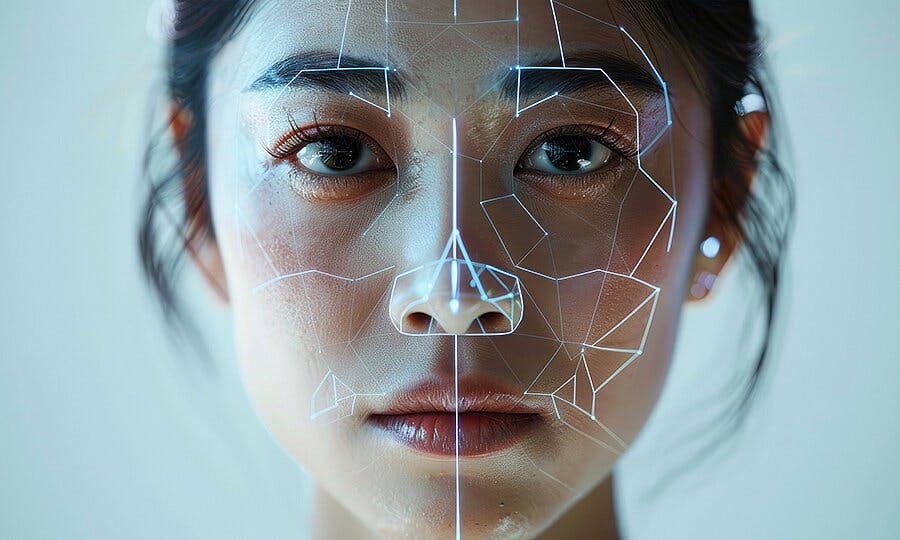

Every day, I work to make sure neural networks serve us — in gaming, in blockchain, and beyond. But the reality is, we already live in a world where these same networks can steal your face, your voice, even your reputation. And what’s even more frightening? It’s almost impossible to tell these fakes apart from the real thing.

Deepfakes are very real, and this is not a “Black Mirror” episode. We can now recreate people digitally in a way that is virtually untraceable. Ironically, what we need to fight deepfakes is other AI systems.

Fighting Fire With Fire, Or The Ironic Loop Of AI Realism

==By 2025, deepfake realism has reached a whole new level.== The arms race between AI generators and detectors has turned into a full-blown crisis of trust, with every advance in generation outpacing our best defenses.

Cutting-edge diffusion models and text-to-video tools (think Sora clones, HeyGen, or D-ID) now make it easy to generate ultra-realistic fake videos from just a prompt. This tech has already seeped into unexpected places like Web3: scammers have used deepfakes of crypto founders to push fake token sales, and even game trailers can be forged to hype up nonexistent projects.

Meanwhile, Big Tech scrambles to keep up. Google’s

The problem is stark: we train AI to unmask AI, but by the time a detector learns, another model is already better at lying. It’s a game of cat and mouse, and our sense of reality is the playground. In the age of deepfakes, the big question isn’t whether you can trust what you see, but how long you can trust your eyes at all.

Detection ≠ Solution. We Need Faster Learning

Deepfake detection isn’t as simple as spotting one obvious glitch. A convincing fake is a complex blend of subtle factors: eye movement, facial micro-expressions, skin texture, and even tiny mismatches between lip movements and audio. Each added layer of realism makes detection considerably harder.
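To make one of these clues concrete: a crude lip-sync check compares how wide the mouth is open in each frame with how loud the audio is in that same frame. If the two signals only line up after shifting one of them by several frames, the voice may have been dubbed or generated separately. The Python sketch below is a toy illustration under loud assumptions: the per-frame mouth-opening values would come from some face-landmark tracker and the loudness values from an audio envelope (neither is shown), the function names are hypothetical, and real detectors learn far richer audio-visual features than a single cross-correlation.

```python
import math
import random

def normalize(xs):
    """Return a zero-mean, unit-variance copy of a signal (a flat signal becomes all zeros)."""
    mean = sum(xs) / len(xs)
    std = math.sqrt(sum((x - mean) ** 2 for x in xs) / len(xs)) or 1.0
    return [(x - mean) / std for x in xs]

def best_lag(mouth, audio, max_lag):
    """Find the frame shift that best aligns audio loudness with mouth opening.

    Compares mouth[i] with audio[i + lag] for every lag in [-max_lag, max_lag]
    and returns (lag, score), where score is the average product of the
    normalized signals (a correlation). A positive lag means the audio trails the lips.
    """
    m, a = normalize(mouth), normalize(audio)
    best, best_score = 0, -math.inf
    for lag in range(-max_lag, max_lag + 1):
        pairs = [(m[i], a[i + lag]) for i in range(len(m)) if 0 <= i + lag < len(a)]
        score = sum(x * y for x, y in pairs) / len(pairs)
        if score > best_score:
            best, best_score = lag, score
    return best, best_score

# Synthetic demo: random per-frame mouth openings, audio delayed by 3 frames.
rng = random.Random(0)
mouth = [rng.random() for _ in range(200)]
audio = [0.0] * 3 + mouth[:-3]
lag, score = best_lag(mouth, audio, max_lag=10)
print(lag, round(score, 2))
```

Here the audio trails the lips by three frames, so the sketch should report a lag of 3 with a score near 1. How much lag should count as suspicious is a tuning choice this toy does not settle.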

There’s no such thing as a universal deepfake detector — each AI model is only as good as the dataset it was trained on. For example, Meta’s AI Image Inspector is tuned for images, but it might miss video or audio fakes made with advanced diffusion models like Runway Gen-3 or Sora.

Even watermarks can be cropped out or altered. The takeaway? Spotting deepfakes isn’t about catching a single flaw; it’s about piecing together dozens of clues and proving authenticity before fakes go viral. In this AI arms race, provenance metadata might be our last line of defense against a world where we trust nothing and doubt everything.
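One simple way to “piece together clues” is to let each specialized detector output a probability that a clip is fake, then pool those probabilities in log-odds space: several weak signals that agree add up to a stronger verdict, while a neutral 0.5 contributes nothing. In the Python sketch below, the clue names, scores, and weights are hypothetical, and the pooling assumes the clues are roughly independent, which real detectors rarely are. Treat it as an illustration of the idea, not a production scoring scheme.

```python
import math

def logit(p: float) -> float:
    """Convert a probability to log-odds, clamping to avoid infinities."""
    p = min(max(p, 1e-6), 1 - 1e-6)
    return math.log(p / (1 - p))

def fuse_clues(scores: dict, weights: dict) -> float:
    """Weighted log-odds pooling of per-clue 'fake' probabilities.

    Each clue's log-odds is multiplied by its weight (default 1.0) and summed;
    the total is mapped back to a probability with the logistic function.
    """
    z = sum(weights.get(name, 1.0) * logit(p) for name, p in scores.items())
    return 1 / (1 + math.exp(-z))

# Hypothetical detector outputs for one clip (probability that it is fake).
clip_scores = {"blink_pattern": 0.7, "skin_texture": 0.6, "lip_sync": 0.8}
clip_weights = {"lip_sync": 1.5}  # hypothetical: trust the lip-sync clue a bit more
print(round(fuse_clues(clip_scores, clip_weights), 3))
```

No single clue here is damning, but together they push the fused score well above any individual one, which is the point of layering.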

No Deepfake Shall Pass. How Companies Keep the Fakes Out

When deepfakes can pop up anywhere, from fake AMA videos to bogus game trailers, companies are forced to play digital bodyguards 24/7. Many studios and Web3 projects now screen user-generated content (UGC) from players and influencers, double-checking every clip for signs of AI tampering.

Some go further in playing deepfake goalie, combining AI detectors with provenance tags: cryptographically signed origin records such as C2PA (Coalition for Content Provenance and Authenticity) Content Credentials, or blockchain-backed records. A few NFT projects even issue “authenticity certificates” for in-game assets, staking their reputations on transparency.
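The core idea behind provenance tags is simple: the publisher records a fingerprint (a cryptographic hash) of the original file together with who made it, signs that record, and anyone can later check that the file still matches. The Python sketch below illustrates only that idea. It is not the C2PA format or any C2PA library API: real Content Credentials use public-key certificates and an embedded manifest, whereas this toy uses a shared-secret HMAC from the standard library, and the key, field names, and functions are all hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical shared secret for this demo only. Real C2PA Content Credentials
# use public-key signatures backed by certificates, not a shared HMAC key.
STUDIO_KEY = b"demo-studio-signing-key"

def _sign(claim: dict) -> str:
    """HMAC-SHA256 over a canonical JSON encoding of the claim."""
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    return hmac.new(STUDIO_KEY, payload, hashlib.sha256).hexdigest()

def make_manifest(asset: bytes, creator: str) -> dict:
    """Record the asset's SHA-256 fingerprint and creator, then sign the record."""
    claim = {"creator": creator, "sha256": hashlib.sha256(asset).hexdigest()}
    return {"claim": claim, "signature": _sign(claim)}

def verify(asset: bytes, manifest: dict) -> bool:
    """True only if the record is untampered AND the asset still matches it."""
    if not hmac.compare_digest(_sign(manifest["claim"]), manifest["signature"]):
        return False  # the record itself was edited
    return manifest["claim"]["sha256"] == hashlib.sha256(asset).hexdigest()

# Demo with placeholder bytes standing in for a trailer file.
trailer = b"official trailer bytes"
manifest = make_manifest(trailer, "Example Studio")
print(verify(trailer, manifest))          # True
print(verify(trailer + b"!", manifest))   # False: the asset was altered
```

A single changed byte in the asset, or an edited creator field in the record, makes verification fail. What the check cannot do is judge a clip whose manifest was stripped: missing credentials prove nothing either way, which is why provenance works best alongside detection rather than instead of it.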

Verification isn’t just for assets. Some studios now authenticate official voiceovers and video statements for big announcements, trailers, and live AMAs to stop deepfake PR disasters before they happen.

Companies like Adobe, Microsoft, and Sony have joined the C2PA initiative to push for industry-wide standards, so that creators and players alike can trust that “this content is the real deal.”

However, it’s not a silver bullet. Watermarks can be removed, and detection can fail. That said, layered defenses are our best bet against an environment where trust is constantly under siege. In this game, the message is simple: if your community knows the source, they can believe what they see.

Future-Proofing Our Eyes: Trust, But Verify — Then Verify Again

The future of deepfakes isn’t just about better tech; it’s about redefining trust itself. AI-generated content is poised to dominate the online landscape, disinformation included: analysts from OODA Loop and others predict that by 2026, as much as 90% of online content may be synthetically generated by AI.

“On a daily basis, people trust their own perception to guide them and tell them what is real and what is not,” as researchers have noted. “Auditory and visual recordings of an event are often treated as a truthful account of an event.”

As synthetic media becomes cheaper and easier to make, with text-to-video models like Sora or Runway improving every month, our defense won’t be to ban fakes entirely (that’s impossible). Instead, we’ll need a whole new culture of digital verification. Just like we once learned to spot phishing emails or fake news headlines, we’ll need to verify videos, voices, and “proof” before we share them.

Some startups are building browser plug-ins that flag suspected deepfakes in real time. Major platforms may soon adopt default content provenance tags, so users know where every clip comes from. The real shift isn’t technical; it’s social.

We must teach the public to pause and ask: “Who made this? How do I know?” As one researcher put it, “We can’t stop deepfakes. But we can help the world spot them faster than they can be weaponized.” In the age of AI illusions, reasonable doubt might be our greatest shield.

There’s a lot to unpack, so let’s wrap up.

Deepfakes aren’t sci-fi anymore; they’re today’s reality. As AI generation tools become more powerful, the responsibility to protect trust falls on companies as well as users. Yes, those who build these neural networks must lead the way with robust safeguards, transparent provenance tools, and universal standards. But users, too, must stay vigilant and demand accountability. If we want a future where we can trust our eyes again, it’s up to everyone — creators, companies, and communities — to make authenticity the default.

After all, if you’re building the future, make sure it’s one where truth still stands a chance.

Featured image source here.